Sadly, misinformation has become ubiquitous in modern society. Whether it’s politics, climate change, vaccination, or even the shape of our planet, we are bombarded with a dizzying flood of conflicting messages. How do we make sense of the information overload?

This problem is even more challenging when we consider research showing that accurate scientific information can be cancelled out by misinformation. When people are confronted with two conflicting messages and have no way to resolve the conflict, the risk is that they disengage and believe neither. This means that our best efforts to teach science can potentially be undone by misinformation. Teaching scientific facts is necessary but insufficient.

Fortunately, there is an answer. If the problem is not being able to resolve the conflict between fact and myth, the solution is equipping people with the skills to resolve that conflict. Inoculation theory is a branch of psychological research that offers a way to achieve this. Just as exposing people to a weakened form of a virus builds up their immunity to the real virus, exposing people to a weakened form of misinformation builds up their “cognitive antibodies” so that when they encounter real misinformation, they’re less likely to be misled. We deliver misinformation in a weakened form by explaining the rhetorical techniques used to mislead, much like explaining the sleight of hand in a magician’s trick.

Consequently, as well as teaching the science of how the climate works, it’s also important that we teach how the science can get distorted. Applying this approach in the classroom goes by many names (misconception-based instruction, agnotology-based learning, or refutational teaching), all representing the same style of teaching scientific concepts by explaining scientific misconceptions. This approach has been shown to be one of the most powerful ways of teaching science. It’s more engaging for students, it achieves stronger learning gains, and the lessons last longer in students’ minds. [Note: NCSE's climate change lesson sets take a misconceptions-based approach.]

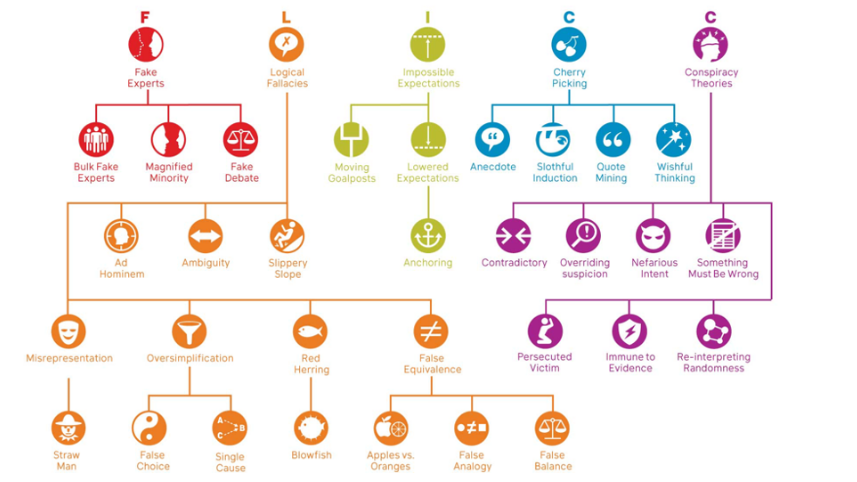

To provide a framework for explaining the misleading techniques used in misinformation, I developed the FLICC taxonomy. FLICC stands for the five techniques of misinformation: fake experts, logical fallacies, impossible expectations, cherry picking, and conspiracy theories. Of course, these five are just the tip of the iceberg sitting atop a large number of other rhetorical techniques, logical fallacies, and conspiratorial traits.
